Ba-Hien Tran

I am currently a Senior Research Scientist in Machine Learning at Huawei Paris Research Center.

I obtained my PhD in machine learning from Sorbonne University under the supervision of Prof. Maurizio Filippone, and my thesis received the Best PhD Thesis Award. Prior to this, I earned my Diplôme d’Ingénieur in computer science from Télécom Paris, Polytechnic Institute of Paris, graduating with highest distinction.

My research lies at the intersection of probabilistic modeling and deep learning, with a strong emphasis on developing extremely efficient AI models. I am deeply committed to advancing both academic research and industrial innovation. My work is regularly published in top-tier machine learning venues, including JMLR, NeurIPS, ICML, ICLR, and AISTATS, mostly with me as first author. I am also an inventor on more than 30 filed patents, in most cases as the principal inventor, and many of these have been recognized by the company as high-value inventions.

My overarching goal is to develop AI solutions that are reliable, robust, and scalable, while simultaneously optimizing data, computational, and energy efficiency. To achieve this, my research focuses on improved priors, efficient inference techniques for deep probabilistic models, and novel approaches to extremely efficient AI, from massive foundation models to ultra-low-latency edge AI. Specifically, my work is driven by three core pillars:

- Reliable AI (Uncertainty Quantification): Grounded in deep probabilistic modeling, I develop systems that “know what they don’t know.” By leveraging improved priors and principled inference, I focus on accurate uncertainty quantification to ensure safe, trustworthy decision-making in high-stakes applications.

- Robust AI (Generalization & Robustness): Real-world deployments require models that can withstand noise and unpredictability. My research uses probabilistic frameworks to make models more robust to distributional shifts, out-of-distribution (OOD) data, and adversarial perturbations, ensuring consistent performance in dynamic environments.

- Efficient AI (Resource Optimization): I am dedicated to drastically reducing computational, memory, and energy footprints without sacrificing accuracy. I develop novel approaches, such as low-precision computing, scalable and efficient training and inference, and model compression, that bridge the gap between massive foundation models and ultra-low-latency edge devices.

news

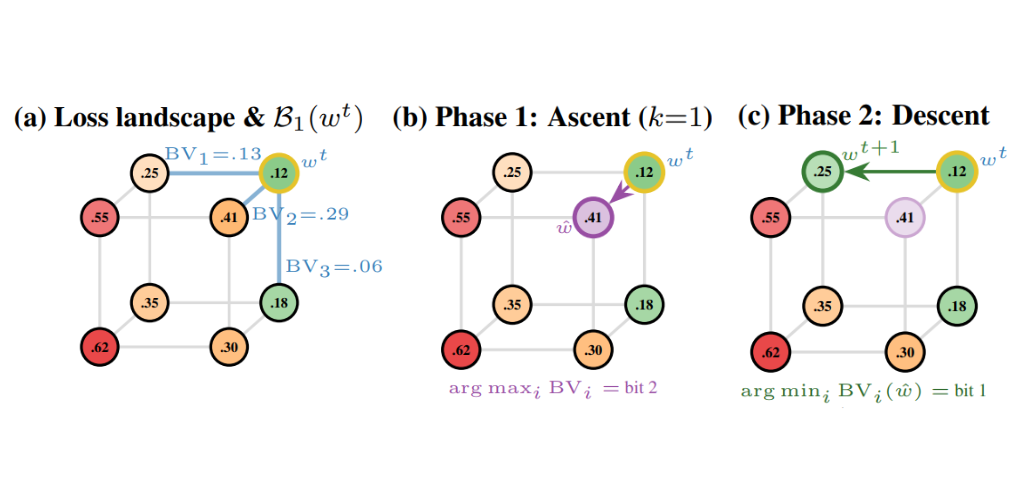

| May 24, 2026 | 🎉 My paper, ‘Sharpness-Aware Minimization Directly on the Boolean Hypercube’, has been accepted at ICML 2026 Workshop on Weight-Space Symmetries: from Foundations to Practical Application! |

|---|---|

| Apr 11, 2026 | 🚩 I will serve as an Area Chair for NeurIPS 2026. |

| Apr 10, 2026 | 🏆 I am honored to receive the Huawei PRC President Award for Outstanding Contribution to Patents! |

| Mar 30, 2026 | 🎖️ I am honored to receive the R&D President Award for R&D Delivery Technology Breakthrough Contribution! |

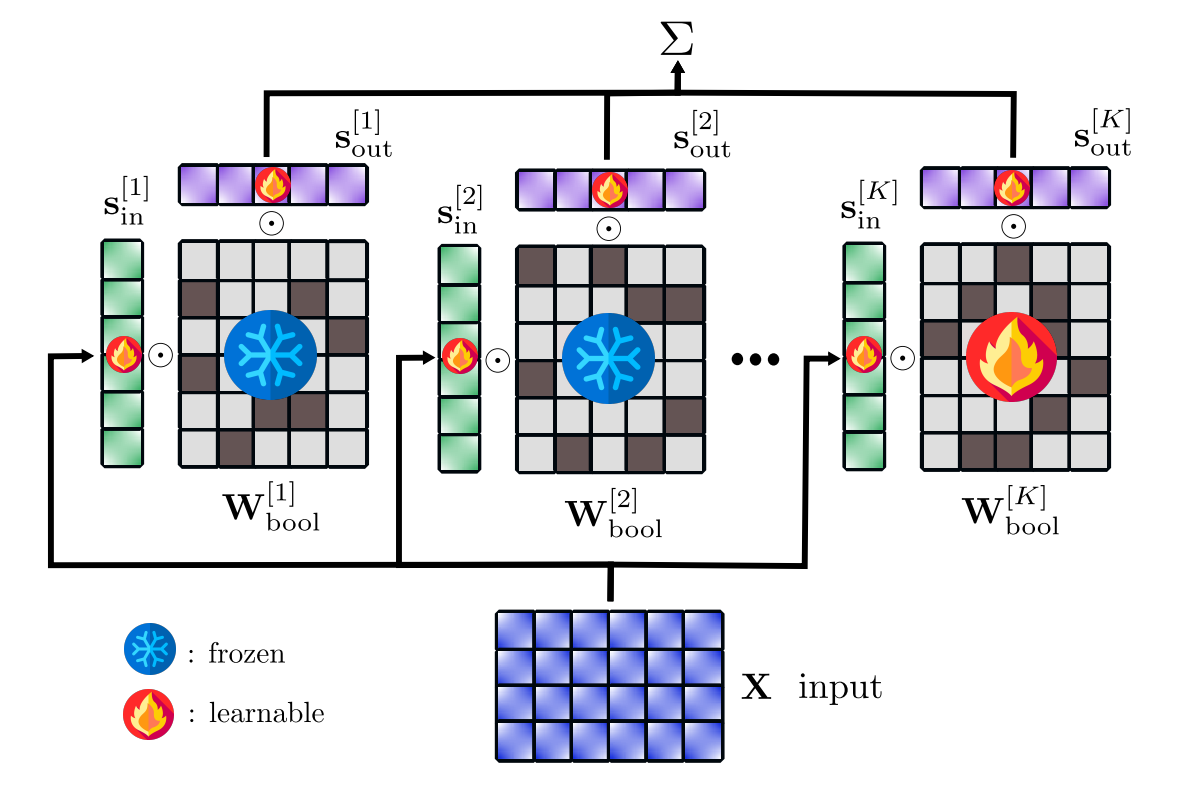

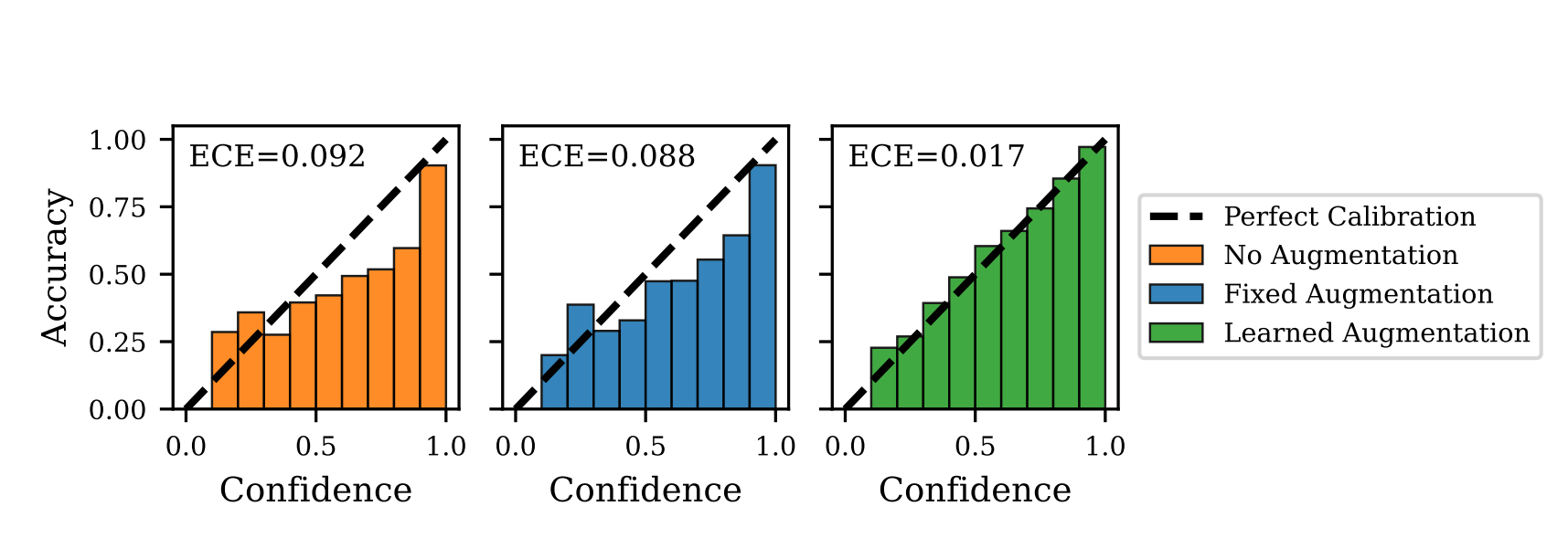

| Jan 26, 2026 | 🎉 Our papers ‘Highly Efficient and Effective LLMs with Multi-Boolean Architectures’ and ‘Optimizing Data Augmentation through Bayesian Model Selection’ have been accepted at ICLR 2026! |

| Dec 10, 2025 | 🎖️ I have been recognized as a ‘Top Reviewer for the NeurIPS 2025 conference’! |

| Dec 09, 2025 | 🏆 I am honored to receive the Corporate-level Huawei Quality Star and Future Star for Outstanding Performance, Breakthroughs, and Exceptional Quality! |

| Oct 14, 2025 | 🚀 Check out our new paper: ‘Universal Adaptive Environment Discovery’! |

| Jun 16, 2025 | 🎉 Our paper ‘Ultra-Efficient and Effective Large Language Models with Multi-Boolean Architectures’ has been accepted at ICML Workshop on Efficient Systems for Foundation Models and workshop on Machine Learning for Wireless Communication and Networks 2025! |

| May 28, 2025 | 🚀 Check out our new paper: ‘Highly Efficient and Effective LLMs with Multi-Boolean Architectures’! |

| May 27, 2025 | 🚀 Check out our new paper: ‘Optimizing Data Augmentation through Bayesian Model Selection’! |

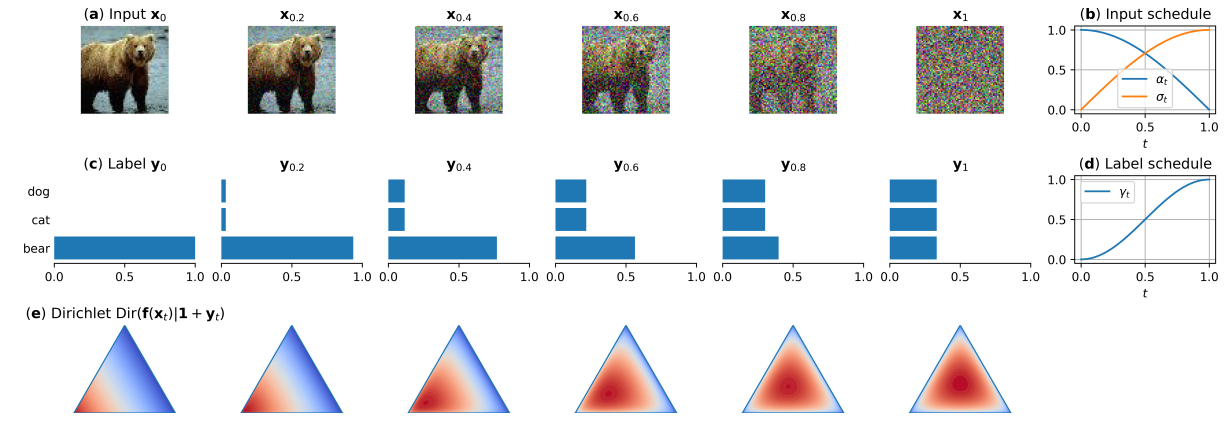

| Jan 22, 2025 | 🎉 Our paper ‘Robust Classification by Coupling Data Mollification with Label Smoothing’ has been accepted at AISTATS 2025! |

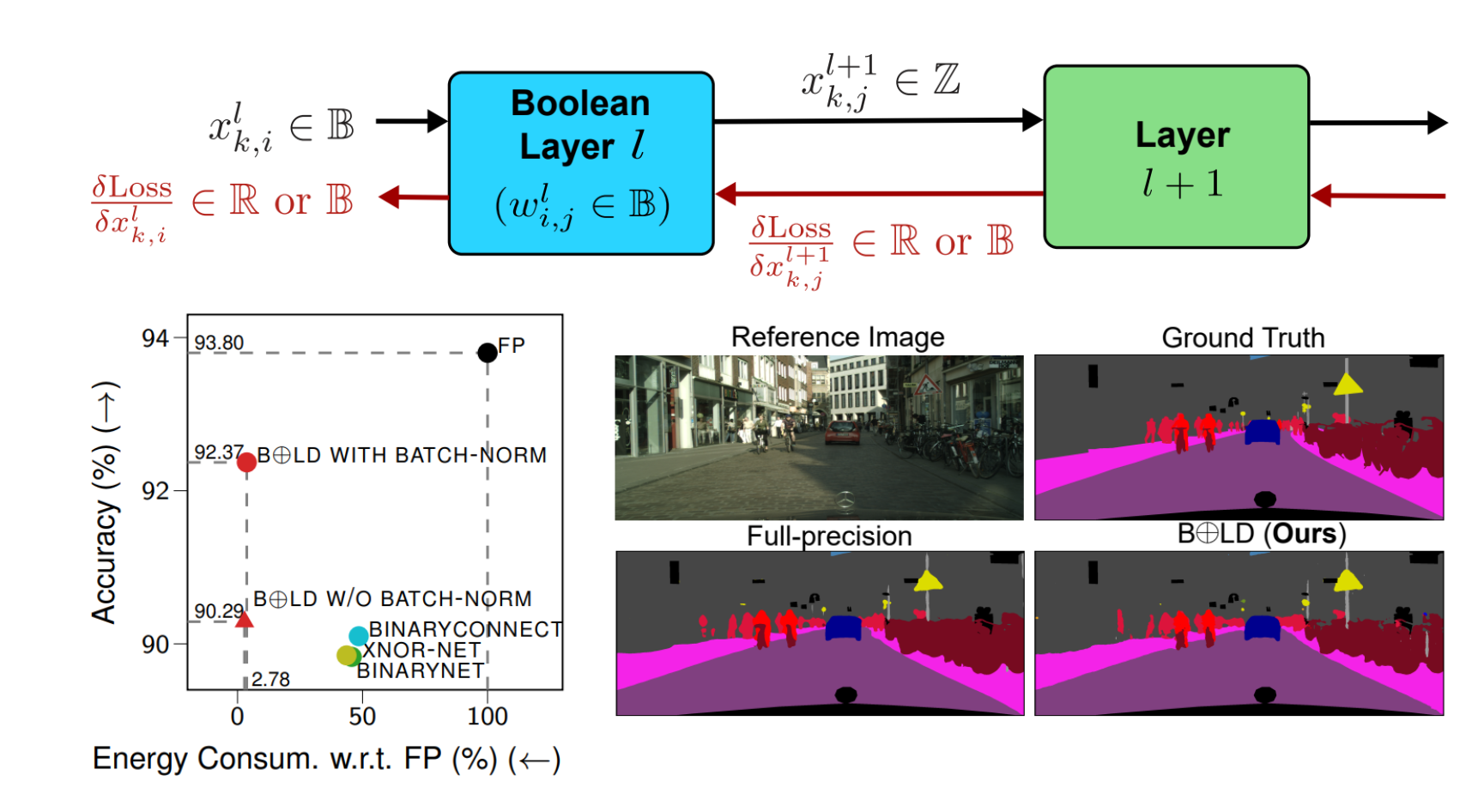

| Sep 26, 2024 | 🎉 Our paper ‘BOLD: Boolean Logic Deep Learning’ has been accepted at NeurIPS 2024! |

| Jun 19, 2024 | 🎉 Our paper ‘Boolean Logic for Low-Energy Deep Learning’ has been accepted at ICML Workshop on Advancing Neural Network Training: Computational Efficiency, Scalability, and Resource Optimization 2024! |

| Jun 03, 2024 | 🚀 Check out our new paper: ‘Robust Classification by Coupling Data Mollification with Label Smoothing’! |

| May 25, 2024 | 🚀 Check out our new paper: ‘BOLD: Boolean Logic Deep Learning’! |

| Apr 11, 2024 | 🎉 Our paper ‘Spatial Bayesian Neural Networks’ has been accepted in the Spatial Statistics journal! |

| Dec 22, 2023 | 🚩 I have joined Huawei Paris research center as a research scientist in machine learning |

| Dec 06, 2023 | 🏆 I have received the 1st prize of ‘Prix de thèse de l’EDITE 2023’ (Best PhD thesis award) from Sorbonne University! |

| Oct 13, 2023 | 🎓 I have defended successfully my PhD thesis! The members of the jury include Chris Oates, Mark van der Wilk, Marco Lorenzi, Serena Villata, Pietro Michiardi, and Maurizio Filippone. |

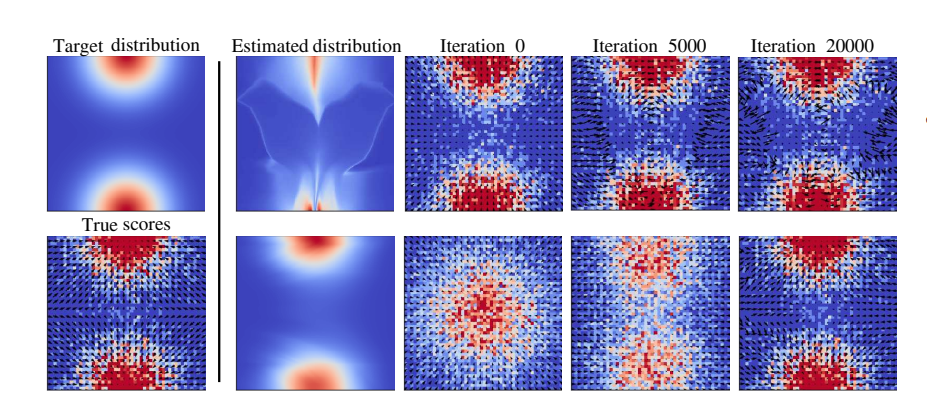

| Sep 21, 2023 | 🎉 Our paper ‘One-Line-of-Code Data Mollification Improves Optimization of Likelihood-based Generative Models’ has been accepted at NeurIPS 2023! |

| Sep 20, 2023 | ✈️ I will be attending and presenting a poster at the workshop ‘Generative models and uncertainty quantification’, GenU 2023. |

| Jun 20, 2023 | 🎉 Our paper ‘Improving Training of Likelihood-based Generative Models with Gaussian Homotopy’ has been accepted at ICML Workshop on Structured Probabilistic Inference and Generative Modeling 2023! |

| May 31, 2023 | 🚀 Check out our new paper: ‘One-Line-of-Code Data Mollification Improves Optimization of Likelihood-based Generative Models’! |

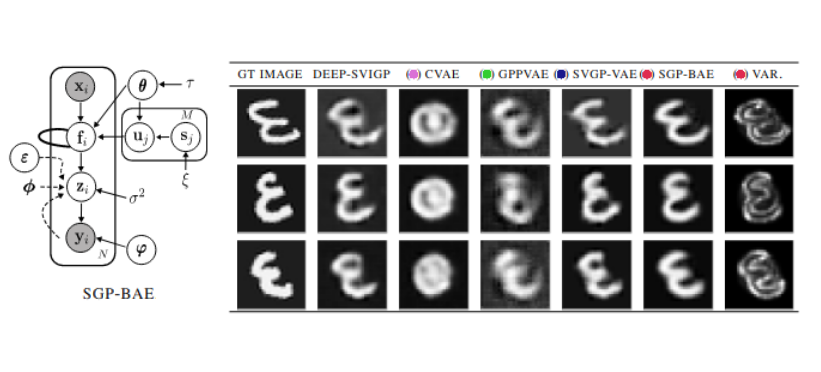

| Apr 24, 2023 | 🎉 Our paper ‘Fully Bayesian Autoencoders with Latent Sparse Gaussian Processes’ has been accepted at ICML 2023! |

| Feb 09, 2023 | 🚀 Check out our new paper: ‘Fully Bayesian Autoencoders with Latent Sparse Gaussian Processes’! |

| Nov 30, 2022 | ✈️ I will be attending the NeurIPS conference and presenting our JMLR paper. |

| Oct 01, 2022 | 💡 I’m visiting the groups of Professors Stephan Mandt and Babak Shahbaba at UCI for three months. |

| May 09, 2022 | ✈️ I’ve had a California trip during April 26th ~ May 8th to visit and give talks at Ermon’s lab (Stanford) and Mandt’s lab (UCI). |

| Apr 01, 2022 | 🎉 Accepted for a contributed talk at Joint Statistical Meetings (JSM) 2022 on ‘Functional Priors for Bayesian Deep Learning’. |

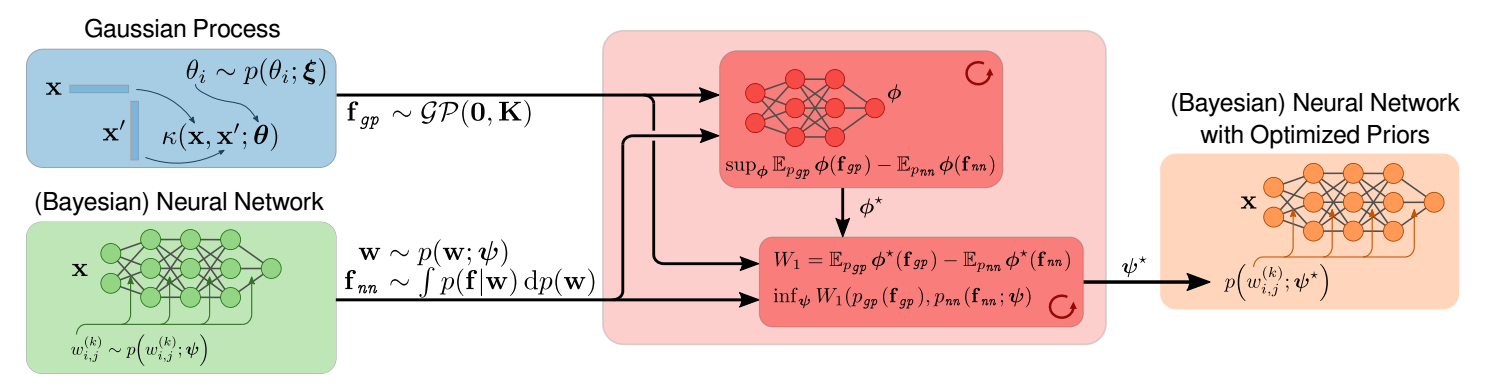

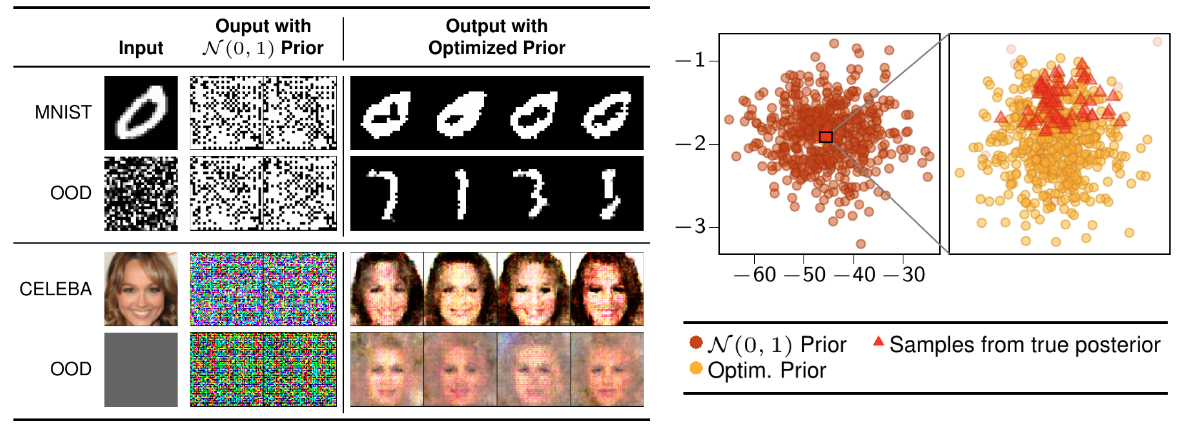

| Mar 25, 2022 | 🎉 Our paper ‘All You Need is a Good Functional Prior for Bayesian Deep Learning’ has been accepted by JMLR! |

| Mar 24, 2022 | 🏅 Received an ISBA Travel Award for Young Researchers. |

| Mar 21, 2022 | 💡 Invited talk at the SIAM Conference on Imaging Science (IS22), organized by Society for Industrial and Applied Mathematics. |

| Mar 01, 2022 | 🎉 Accepted for a contributed talk at ISBA 2022 World Meeting on ‘Functional Priors for Bayesian Deep Learning’. |

| Sep 28, 2021 | 🎉 Our paper ‘Model Selection for Bayesian Autoencoders’ has been accepted at NeurIPS 2021! |

| Sep 01, 2021 | 📍 Invited to serve as a reviewer (for the first time) for AISTATS. |

| Jun 11, 2021 | 🚀 Check out our new paper: ‘Model Selection for Bayesian Autoencoders’! |

| Feb 17, 2021 | 💡 Invited talk at the Data Centric Engineering group, Alan Turing Institute, UK (virtual): ‘Functional priors for Bayesian neural networks’. |

| Jan 12, 2021 | 🎉 Our paper ‘Functional Priors for Bayesian Neural Networks through Wasserstein Distance Minimization to Gaussian Processes’ has been accepted at the 3rd Symposium on Advances in Approximate Bayesian Inference (AABI) 2021! |

| Nov 26, 2020 | 🚀 Check out our new paper: ‘All You Need is a Good Functional Prior for Bayesian Deep Learning’! |

| Sep 14, 2020 | 🎓 I have defended successfully my master thesis at Telecom Paris and EURECOM. |

| Sep 01, 2020 | 🚩 I have joined EURECOM and Sorbonne University as a PhD student in Machine Learning. |